Data collection: Logistics of a novel conjoint study

- Michael Becker

- Survey, Quantitative methods

- March 2026

In criminological research, we often focus on the what—the results, the test statistics, and the policy implications. But for those working to collect original data, the how is often where the real battle is won. In a recent project, we investigated a key bottleneck in national security: the decision-making process of data managers.

Specifically, we wanted to know why data managers approve or deny geospatial data requests. This was part of a broader project examining how violent non-state actors could use emerging geospatial technologies to conduct attacks. To answer that question, we deployed a conjoint survey experiment, a method that forces participants to make trade-offs between competing factors (e.g., the urgency of a request vs. the sensitivity of the data). However, making the study feel “real” to experts meant moving beyond standard survey templates and into custom-built tools and carefully managed data collection pipelines.

The Project Lifecycle: From Idea to Execution

Designing an experimental survey in a security context required a strategic roadmap. Here is how we set up our timeline:

- Conceptual Framework & Design: Translating theories of decision-making and bureaucratic risk assessment into measurable conjoint attributes.

- Infrastructure Development & Stimulus Scripting: Building the programmatic engine in R to generate “authentic” data request forms.

- Platform Integration & Pilot Testing: Stress-testing our stimuli within survey software to identify licensing and technical bottlenecks.

- Regulatory Navigation & IRB Advocacy: Moving past the “standard” application to educate the board on digital methodology.

- Deployment & Data Integrity Management: High-volume collection via MTurk followed by aggressive quality filtration.

- Stakeholder Engagement: Managing the delicate balance of communication with our expert network and institutional gatekeepers.

Five Major Hurdles

Programmatically Generating Stimuli

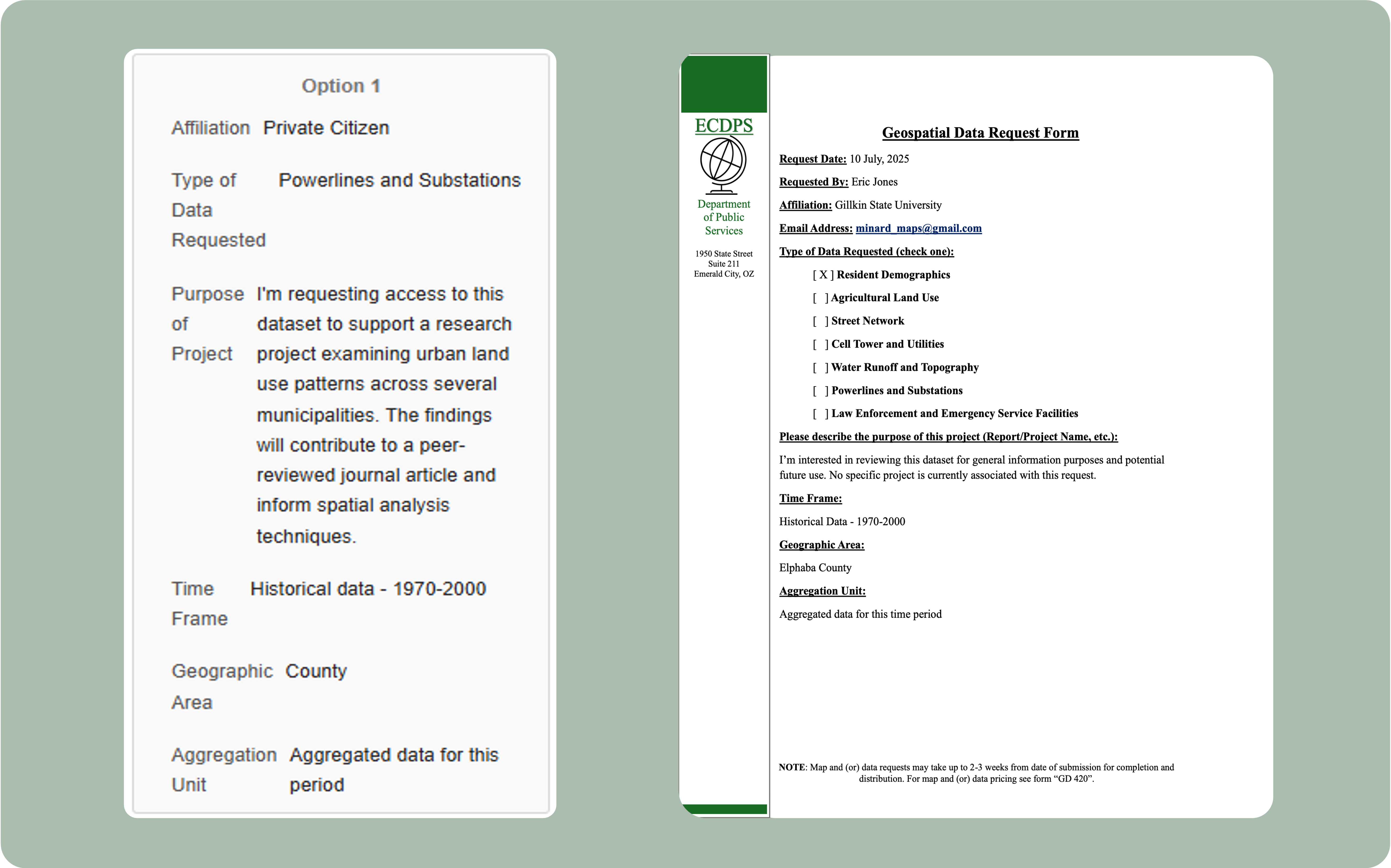

To achieve ecological validity, we couldn’t just list data attributes in a table. We needed participants to view something that looked like a real “Data Request Form.”

- The Hurdle: Ensuring logic-based randomization and high-fidelity factor levels. For example, we had to prevent illogical combinations of attributes from being generated, and we had to make sure a “risky” request never explicitly referred to criminal activity but could still credibly concern a data manager.

- The Solution: We used R to programmatically generate a random sample of several hundred unique PDF stimuli.
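The logic-based randomization described above can be sketched in a few lines. The study itself used R and rendered PDFs; the Python below is only an illustration of the general idea (enumerate profiles, drop implausible ones, then sample reproducibly). The attribute names, levels, and the single exclusion rule are all hypothetical, since the post does not list the real factors.

```python
import itertools
import random

# Hypothetical attributes and levels; the study's actual factors are not given in the post.
ATTRIBUTES = {
    "requester": ["university lab", "private contractor", "unaffiliated individual"],
    "urgency": ["routine", "expedited"],
    "data_sensitivity": ["public", "restricted"],
    "stated_purpose": ["academic research", "site planning", "unspecified"],
}


def is_plausible(profile):
    """Return False for combinations that should never be shown (assumed example rule)."""
    # Assumption for illustration: an unaffiliated individual would not claim academic research.
    if (profile["requester"] == "unaffiliated individual"
            and profile["stated_purpose"] == "academic research"):
        return False
    return True


def sample_profiles(n, seed=2026):
    """Draw up to n unique, plausible profiles from the full factorial design."""
    names = list(ATTRIBUTES)
    full = [dict(zip(names, combo)) for combo in itertools.product(*ATTRIBUTES.values())]
    valid = [p for p in full if is_plausible(p)]
    rng = random.Random(seed)  # fixed seed so the same stimuli can be regenerated
    return rng.sample(valid, k=min(n, len(valid)))


profiles = sample_profiles(10)
for p in profiles[:3]:
    print(p)
```

Filtering the full factorial before sampling keeps the exclusion rules in one auditable function, and the fixed seed means the stimulus set can be regenerated exactly. Each sampled profile would then be passed to whatever templating step renders the request form.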

“License Ceilings” in Survey Platforms

Not all survey platforms are created equal, and neither are their licenses. Qualtrics is an industry standard, but ordinary academic licenses hit a wall when deployed with complex designs.

- The Hurdle: Limitations in custom JavaScript support and caps on the kinds of loops and “Embedded Data” fields can break a conjoint design that requires these functions.

- The Solution: We designed and deployed an R Shiny web application that could satisfy the design requirements, “remember” the logic and the stimuli displayed, and record all user steps to avoid uncertainty about incomplete responses.
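One way to think about "recording all user steps" is an append-only event log per session, so that an abandoned session still shows which stimuli were displayed and which decisions were made. The sketch below is a Python illustration of that idea, not the authors' Shiny implementation; the class name, event names, and stimulus IDs are hypothetical.

```python
import json
import time


class SessionLog:
    """Append-only event log for one respondent session (hypothetical structure)."""

    def __init__(self, session_id, clock=time.time):
        self.session_id = session_id
        self._clock = clock  # injectable clock makes the log easy to test
        self.events = []

    def record(self, event_type, **details):
        """Append one timestamped event; earlier events are never modified."""
        self.events.append({
            "session_id": self.session_id,
            "timestamp": self._clock(),
            "event": event_type,
            **details,
        })

    def last_completed_task(self):
        """Highest task index with a recorded decision, or None if there is none."""
        done = [e["task"] for e in self.events if e["event"] == "decision"]
        return max(done) if done else None

    def to_jsonl(self):
        """Serialize the log as one JSON object per line."""
        return "\n".join(json.dumps(e) for e in self.events)


log = SessionLog("demo-session")
log.record("stimulus_shown", task=1, stimulus_id="form_017")
log.record("decision", task=1, choice="approve")
log.record("stimulus_shown", task=2, stimulus_id="form_203")
# The respondent leaves here: task 2 was displayed but never answered.
print(log.last_completed_task())
```

With a log like this, an incomplete response is no longer ambiguous: the analyst can see exactly which form was on screen when the respondent dropped out.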

Educating the IRB

Our IRB experience wasn’t just a formality; it was an educational campaign.

- The Hurdle: The IRB initially determined that our project could not be approved because the survey was not distributed via Qualtrics (the officially supported platform) and the data would be stored outside University systems.

- The Solution: We attended IRB office hours and scheduled separate meetings to walk our assigned analyst through the details of the R Shiny Web Application, data storage system, and the “minimal risk” nature of our project. This further involved reaching out to ITS for a letter of support to demonstrate that we were protecting respondent anonymity while using tools and systems that the board initially viewed as ineligible.

The “Speeder” Epidemic: Signal vs. Noise

This was perhaps our most consequential challenge. Our survey was designed as a thoughtful, 5-10-minute deep dive. We originally planned to recruit respondents from three sources: Amazon MTurk (general population), a practitioner-oriented professional organization, and an academically oriented professional organization (both organizations focus on geospatial data and topics). Respondents who raced through the survey were a particular concern on MTurk.

- The Hurdle: We collected an initial group of “superspeeders” on MTurk - participants who “completed” the entire 10-minute complex conjoint in under 20 seconds. This clearly did not reflect meaningful engagement with the stimulus since it was difficult to even click through all of the pages in under 20 seconds.

- The Solution: We established “hard-stop” rules for data retention and communicated them to all potential respondents (here, a valid response had to take 3 minutes or more). As a result, we discarded nearly all of our MTurk data to preserve the integrity of the study. If a respondent hadn’t substantively engaged with the stimulus, their “decision” wasn’t data; it was noise.
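A duration-based retention rule like this is simple to apply once completion times are recorded. The following Python sketch shows one possible form of the filter under the 3-minute threshold stated above; the `Response` fields and the example rows are made up for illustration and are not study data.

```python
from dataclasses import dataclass

MIN_SECONDS = 3 * 60  # retention threshold from the text: 3 minutes or more


@dataclass
class Response:
    respondent_id: str
    source: str               # hypothetical labels, e.g. "mturk" or "practitioner"
    duration_seconds: float   # total time from first page to submission


def split_by_duration(responses, min_seconds=MIN_SECONDS):
    """Return (kept, dropped); a response is kept only if it meets the threshold."""
    kept = [r for r in responses if r.duration_seconds >= min_seconds]
    dropped = [r for r in responses if r.duration_seconds < min_seconds]
    return kept, dropped


# Made-up example rows, not study data.
responses = [
    Response("r1", "mturk", 18.0),          # a "superspeeder"
    Response("r2", "mturk", 412.0),
    Response("r3", "practitioner", 365.0),
    Response("r4", "mturk", 179.9),         # just under the threshold
]

kept, dropped = split_by_duration(responses)
print([r.respondent_id for r in kept])
print([r.respondent_id for r in dropped])
```

Returning the dropped responses alongside the kept ones makes it easy to report how many cases each source lost to the rule, rather than silently deleting them.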

Managing the Expert Pipeline

Maintaining a productive relationship with professional network partners and gatekeepers is an ongoing task.

- The Hurdle: Expert practitioners and professional society contacts rarely operate on academic timelines. Months-long IRB review cycles, semester-based project rhythms, and the speed of institutional approvals can erode the goodwill of busy professionals who agreed to participate early in the project. Without sustained, low-effort communication, even committed contacts can disengage or become difficult to re-mobilize when data collection finally begins.

- The Lesson: Keeping these “Expert Points of Contact” engaged requires constant reciprocity and a “low-friction” communication style that respects their operational constraints.

Conclusion

If this project taught us anything, it’s that modern criminological research must be dynamic. Here, that meant being part coder, part data detective, and part diplomat. The hurdles weren’t just about the “math” of the conjoint; they were about the logistics of getting high-quality data into the hands of researchers who can use it—navigating software constraints, institutional gatekeepers, reluctant platforms, and inattentive respondents along the way. The science is only as good as the process that produced it.

Disclaimer:

This material is based on work supported by the U.S. Department of Homeland Security under Basic Ordering Agreement 70RSAT21G00000002/70RSAT23FR0000114. The views and conclusions included here are those of the authors and should not be interpreted as necessarily representing the official policies, either expressed or implied, of the U.S. Department of Homeland Security.